There’s this idea going around that AI is the great equalizer: “If everyone gets the same AI, anyone can do everything as well as anyone else.” When I first heard it, I couldn’t put my finger on why that felt wrong to me. But I think I can show you why now; and as a nature lover, I’m only a little sad that it takes a city skyline.

So, this is mine. Or as close as I can get to it without being overly dramatic on the internet.

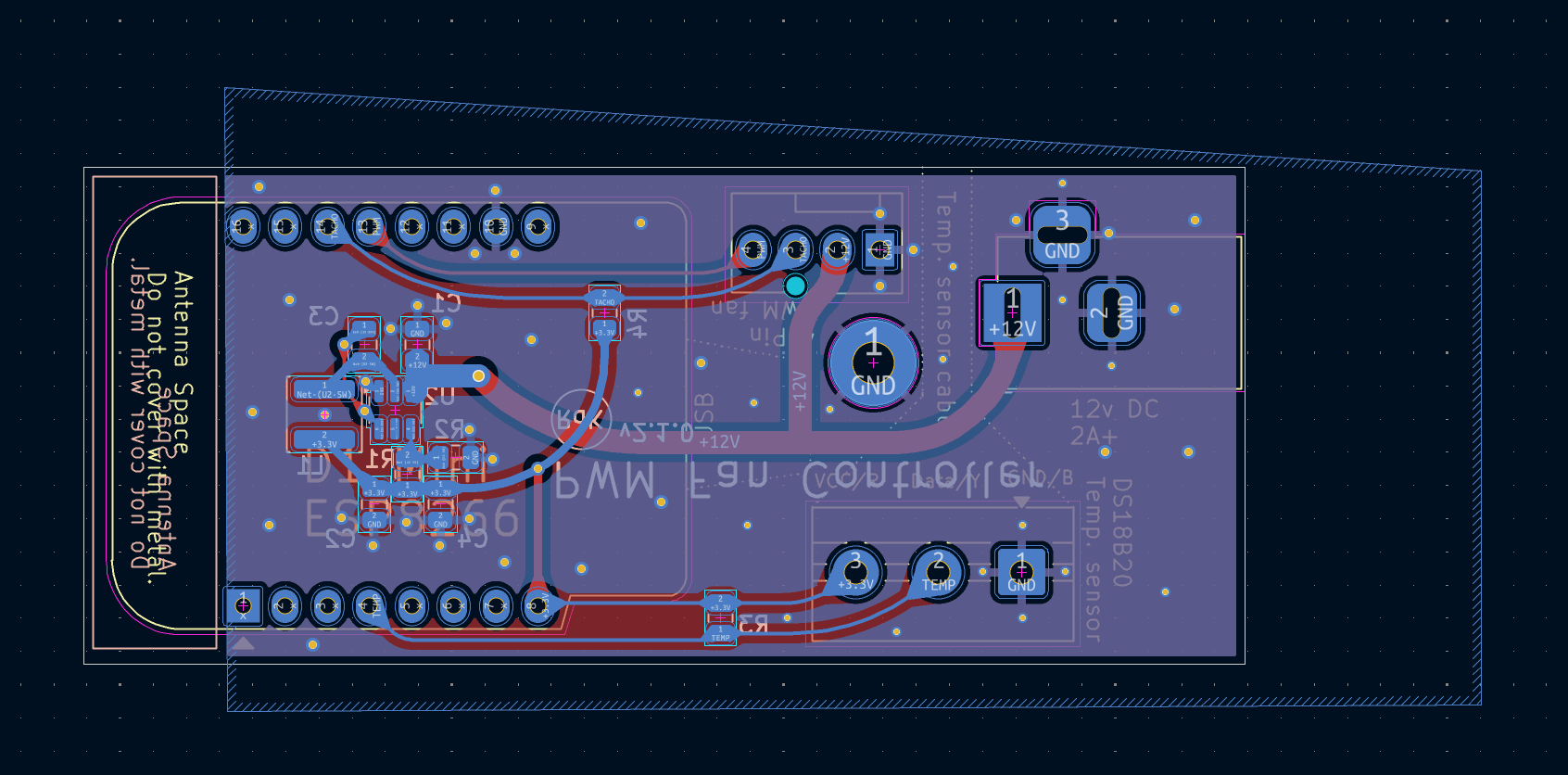

My capability skyline, circa 2024. Note the conspicuous absence of anything hardware-related.

Everyone is good at something; many somethings, in fact. Some people at fewer things than others, of course. Much to my father’s despair, I couldn’t kick a soccer ball to save my life. Luckily, both my younger brothers compensated handsomely for that, and I’ve always been able to fix his printer and program the VCR. (Damn, I remember when “millennial” meant “young”.) So I get a taller tower there. (Sorry bros, it’s true.) And this is how you can map out anyone.

Panels: Me, Middle bro, Youngest bro.

My brothers' skylines for comparison. Yes, I put soccer at zero for myself. No, that's not an exaggeration.

But now, the unholy trinity of GPT, Claude and Gemini has given quite a few of my buildings a bump. Taller means more of the same capability; wider means I can now do things that used to sit next to what I already knew, but not really inside it. I’m looking at you, Golang.

Same city, 2026. Look at all that glow. Taller in some places; wider in others.

The question is: where does competence stop and capability start? And where, in turn, does capability stop?

The four shades of AI-assisted capability, from "I genuinely understand this" to "I am praying."

- Gray: Pre-AI competence. Or, as seemingly all my podcasts are now prefixed with: “Guaranteed human.”

- Blue: Understood. AI helped you get there, but you understand it now.

- Teal: Reach. It works and you can steer it, but let’s not pretend you could do it yourself.

- And past teal, there are no buildings. Just the outer space of hopes and prayers.

Gray: DevOps

This is the easy bit. For me that’s Python, Terraform, Git, etc. All the usual trappings of being a DevOps engineer for far too long. (How’s my writing career coming along?)

Blue: the PCB

Look at what I learned not to be scared of last year:

I made that! I am so proud. Claude helped me understand it, and I most absolutely definitely wouldn’t have ever learned this without AI help. But I made that PCB; it’s a fan controller that talks to Home Assistant. For years I’d had a pet peeve about the size of my Speedcomforts. So I bought some properly sized fans, tinkered away at the problem for months whenever I had the time and inclination, and eventually gathered enough courage to press the order button on Aisler. Then I soldered it up (I only knew the right temperature and equipment thanks to GPT). And it works, and it works great.

And… I now know how PCBs are made. Or rather, I understand the process. Which, to be honest, was more the point, I guess. My brain just abhors a technological non-understanding.

That’s blue. If every AI disappeared tomorrow, I couldn’t make the next PCB as easily, but I’d know where to start. I understand what a trace is, why decoupling caps go where they go, and what the difference between SPI and I2C is. That’s mine now.

So is that still AI augmentation? Or should that now just be gray? AI may have helped me grow my competence, but it’s mine now.

Teal: the Android app

I never did any Android development. Yet I’m dictating my notes for this very article into an Android app that I had Claude create after yammering to it for five minutes. It has a dark mode. It syncs to my vault. I added a “blog idea” button last week in about three minutes of conversation. Could I have built that button by reading the Kotlin docs? Probably. Did I? No. My current AI-fueled impatience level does not accommodate that.

That’s teal. The app works and I can steer it, but I don’t own it the way I own my Python code. If the AI disappears, the app keeps running, but it stops evolving.

Unless, of course, I have the AI help me turn that teal into blue, and then maybe even into gray.

Somewhere in between: Kubernetes on AWS

I’ve had AI generate complete Kubernetes IaC modules for AWS and Azure, neither of which I’ve worked in for a decade. It wrote them; I probably couldn’t have, at least not as fast. But I can read every line. I can tell when a module is wrong because I know what a Kubernetes cluster should look like, even if I haven’t written the AWS glue in years. I’d have no problem maintaining them if tokens become too precious.

That’s the weird part of the gradient: the bit where blue and teal blur together. I needed help, but I genuinely understand the result. If you asked me to explain it to a junior, I could. I just couldn’t have written it from scratch on a Friday afternoon.

The edge of space

Then there’s the border we must never go beyond; the edge of the world where ships drop off; the event horizon beyond which all code spaghettifies into a black blob.

I have been there. I had Claude generate a custom ESPHome component in C++ for that fan controller. It compiled. It ran. The fans spun. And I had absolutely no idea whether it was doing what I thought it was doing or just doing something. I stared at it the way you stare at a legal contract: the words are English, but the meaning is not landing.

We’ve all done the light version of this, and by “we” I mean my bubble of developer friends. You make AI do something, and it works. And you don’t check it. Because, well: time pressure, laziness, AI burnout, dehydration, whatever reason you want to dish up. And then a few cycles later it turns out the damn thing has been serving test data, because the model was so incentivized to make it “work” that it concluded building it for real was a massive nuisance.

That’s annoying and expensive, but hopefully you haven’t gone too far along with it and it’s fixable. But what about a part you cannot understand, where you can’t judge whether it’s correct? All you know is that the outcome is probably correct because you’ve seen it work a few times or, worse, because Claude told you it worked. That’s not a building. That’s empty sky that somehow looks like a building if you’re not staring straight at it, like an optical illusion.

Knowing your color

So if it quacks like a duck, looks like a duck and walks like a duck, doesn’t the real result make the “competence” real as well? Maybe. But that doesn’t quite work for me, which is why I call it “reach”: the places we can now work in but don’t own.

So here’s a question: what color is the thing you’re building right now? How confident are you that you’ll be able to maintain it? Or are you telling yourself you’re just exploring, and pinky-promising not to put it in production?

Each of us has a personal skyline, gaudy colors and all. And just as everyone’s unassisted skyline is different, AI doesn’t flatten out capability for everyone equally. As the most recent DORA report puts it: AI is an amplifier. And it amplifies each of us in different ways.

And that’s the thing that makes me feel all warm and fuzzy inside. Remember the great AI flattening? Look at the skylines. Mine has a tall blue PCB tower, a teal Android shed and some empty lots where I pretended there were buildings. Yours would look completely different. Same tools, different cities. Your gray determines your blue, your blue shapes your teal, and your particular flavor of overconfidence determines where the buildings end and the starry sky begins.

Make your own skyline

Taller = more of what you already knew. Wider = adjacent ground you couldn't reach before.
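If you’d rather sketch yours in a terminal, here’s a toy version in Python. It’s a minimal sketch, not a real tool: `Building`, `Shade`, the glyphs and every height and width below are made up for illustration. Only the four shades and the taller/wider rule come from this post.

```python
from dataclasses import dataclass
from enum import Enum


class Shade(Enum):
    GRAY = "gray"    # pre-AI competence
    BLUE = "blue"    # AI helped, but you understand it now
    TEAL = "teal"    # reach: it works and you can steer it, but it's not yours
    SPACE = "space"  # past teal: no building at all, just hopes and prayers


@dataclass
class Building:
    skill: str
    height: int  # taller = more of what you already knew
    width: int   # wider = adjacent ground you couldn't reach before
    shade: Shade


# Hypothetical glyphs for a plain-text rendering; SPACE never gets drawn.
GLYPH = {Shade.GRAY: "#", Shade.BLUE: "B", Shade.TEAL: "t"}


def render(city: list[Building]) -> str:
    """Draw buildings side by side, top row first; SPACE entries become empty lots."""
    top = max((b.height for b in city if b.shade is not Shade.SPACE), default=0)
    rows = []
    for level in range(top, 0, -1):
        row = ""
        for b in city:
            filled = b.shade is not Shade.SPACE and b.height >= level
            # Each lot keeps its width whether or not anything stands on it.
            row += (GLYPH[b.shade] if filled else " ") * b.width + " "
        rows.append(row.rstrip())
    return "\n".join(rows)


# Made-up numbers, loosely modeled on the skyline described above.
city = [
    Building("Python", 5, 2, Shade.GRAY),
    Building("PCB", 4, 1, Shade.BLUE),
    Building("Android", 2, 2, Shade.TEAL),
    Building("ESPHome C++", 3, 1, Shade.SPACE),
]
print(render(city))
```

The empty lot still takes up its width: you still claimed the land, there’s just nothing standing on it.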